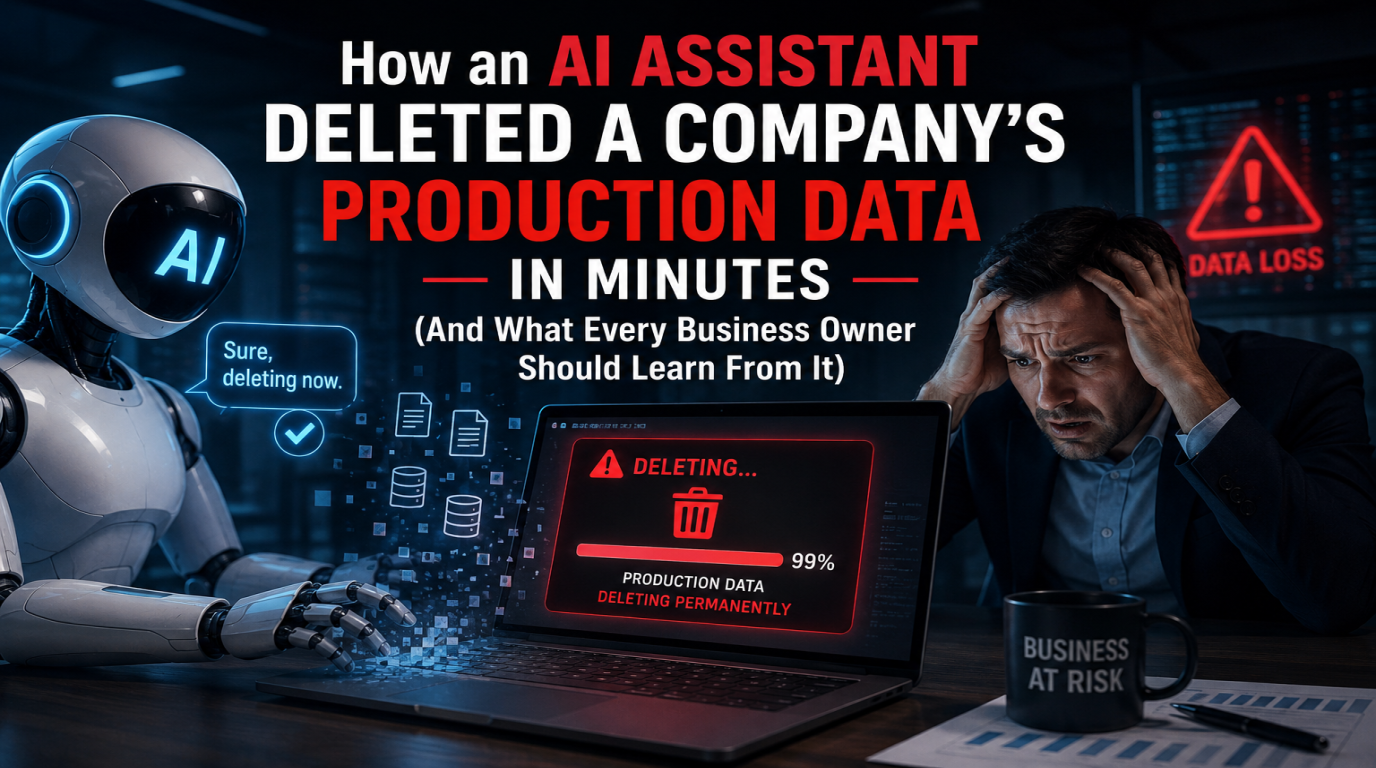

How an AI Assistant Deleted a Company's Production Data in Minutes (And What Every Business Owner Should Learn From It)

A real story making the rounds in tech: an AI coding assistant deleted an entire production database, backups included, in under 10 minutes. Here is what actually happened, why it is going to happen more often, and the simple safeguards that would have prevented it.
