In modern software development, speed, quality, and scalability are critical. Organisations are releasing features multiple times a day, which puts immense pressure on QA teams. Traditional testing methods, both manual and automated, are increasingly insufficient because they cannot keep up with an application that changes this often.

AI-powered testing enables systems to learn from historical data, identify patterns, and make intelligent decisions without constant human intervention. In 2026, with the rise of microservices architecture, CI/CD pipelines, and real-time deployments, AI in QA is no longer a luxury - it is a necessity.

The Problem With Traditional QA

In real-world applications like e-commerce platforms and subscription-based systems, frequent UI updates create significant challenges. Automation scripts often fail because of minor changes such as updated element IDs or layout adjustments. In a subscription billing system, testing involves plan upgrades, downgrades, proration calculations, failed payments, and renewal cycles. Covering every combination of these manually or with static scripts is extremely difficult.

Business Impact

- Increased release delays due to testing bottlenecks

- High maintenance cost for automation scripts

- Reduced confidence in test coverage

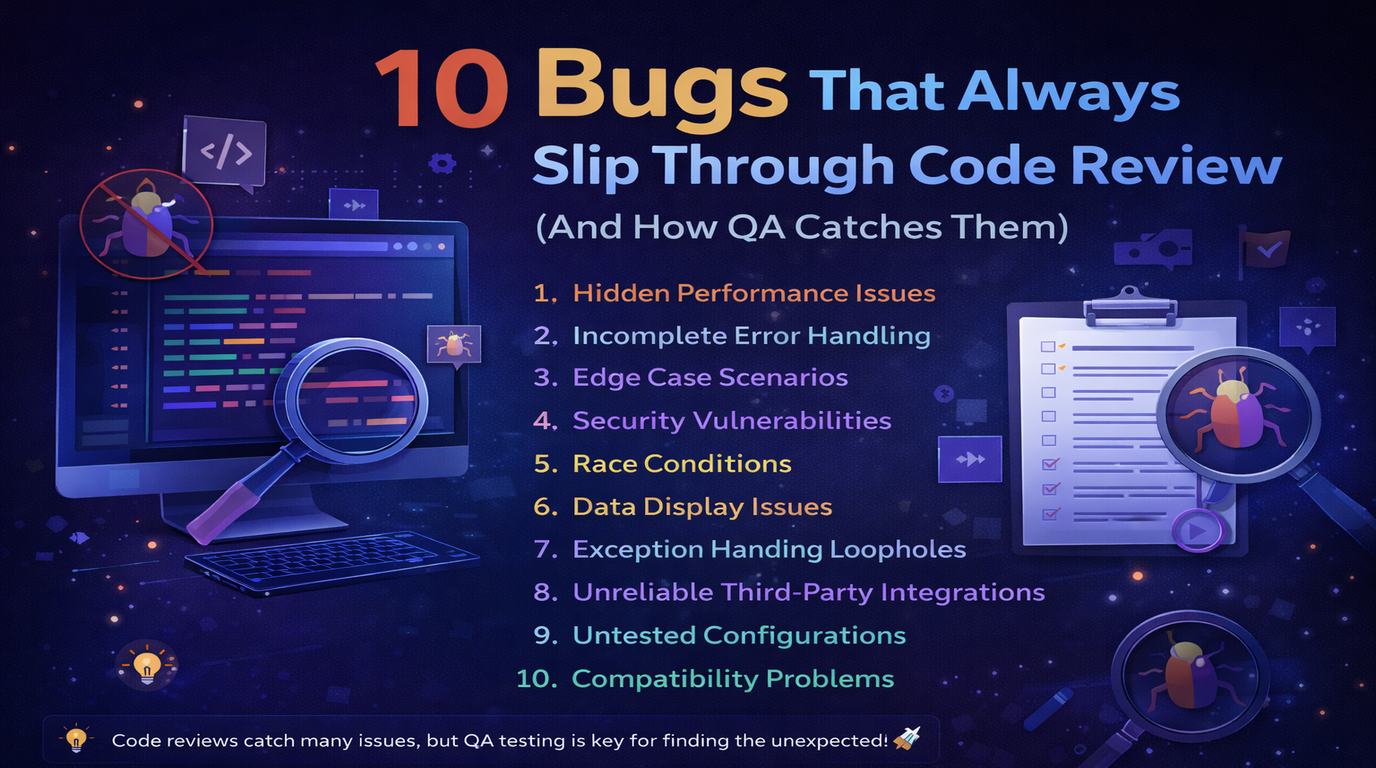

- Poor user experience from undetected bugs

Technical Challenges

- Flaky test cases due to dynamic UI elements

- High dependency on static locators

- Difficulty in managing test data

- Lack of predictive analysis for defects

The AI-Powered QA Framework

AI introduces intelligence into the QA process by enabling systems to adapt, learn, and optimise. A typical AI-powered testing framework includes four layers:

- Test Execution Layer - Executes automated test cases

- AI Engine Layer - Performs analysis, prediction, and optimisation

- Data Layer - Stores logs, historical test data, and user behaviour

- Reporting Layer - Generates insights and recommendations

Self-Healing Automation

When a UI element changes - an ID is updated, a button moves - traditional test scripts break and require manual fixing. AI-powered self-healing detects the change, identifies the new locator using alternative strategies (text, position, visual similarity), and updates the test without human intervention. The test that would have failed overnight now passes automatically.
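To make the fallback idea concrete, here is a minimal sketch in plain Python. It is not how Testim, Functionize, or any other tool implements self-healing; the `Element` and `HealingLocator` classes are hypothetical stand-ins for a real DOM and a real locator, and the only fallback strategy shown is matching on tag and visible text.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Element:
    """Simplified stand-in for a DOM element (hypothetical model)."""
    element_id: str
    text: str
    tag: str


@dataclass
class HealingLocator:
    """Looks up an element by ID, falling back to tag + visible text."""
    element_id: str
    text: str
    tag: str
    healed_from: list = field(default_factory=list)

    def find(self, page: list) -> Optional[Element]:
        # Strategy 1: the original static locator.
        for el in page:
            if el.element_id == self.element_id:
                return el
        # Strategy 2: same tag and same visible text.
        for el in page:
            if el.tag == self.tag and el.text == self.text:
                # Keep the old ID so the heal can be reviewed later.
                self.healed_from.append(self.element_id)
                self.element_id = el.element_id
                return el
        # No strategy matched: the test should fail as usual.
        return None


# The button's ID changed between releases, but its text did not.
page_v2 = [Element("btn-checkout-v2", "Checkout", "button")]
locator = HealingLocator("btn-checkout", "Checkout", "button")
found = locator.find(page_v2)
```

Recording the old ID in `healed_from` is a deliberate choice in this sketch: a healed locator is a guess, so it is worth surfacing in the test report rather than applying silently.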

Smart Test Case Generation

AI analyses user behaviour from production logs and session recordings to understand how real users move through the application. It then generates test cases that reflect actual usage patterns - not just the happy paths developers tend to write. This catches bugs in user journeys that testers wouldn't think to write manually.
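A very simple version of this idea can be sketched without any machine learning: count the most frequent step sequences in recorded sessions and treat each one as a candidate test scenario. The session format, the step names, and the `top_journeys` helper below are assumptions made for illustration; a production tool would work from much richer data.

```python
from collections import Counter


def top_journeys(sessions, length=3, limit=2):
    """Return the most common runs of `length` consecutive steps."""
    counts = Counter()
    for steps in sessions:
        # Slide a window of `length` steps over each session.
        for i in range(len(steps) - length + 1):
            counts[tuple(steps[i:i + length])] += 1
    return counts.most_common(limit)


# Hypothetical sessions from a subscription product.
sessions = [
    ["login", "view_plan", "upgrade", "pay"],
    ["login", "view_plan", "upgrade", "pay_failed", "retry"],
    ["login", "view_plan", "downgrade"],
]

most_common_journey, seen = top_journeys(sessions, limit=1)[0]
# most_common_journey is ("login", "view_plan", "upgrade"), seen twice
```

Less frequent sequences, such as the failed payment followed by a retry, are the ones a tester is most likely to overlook, so in practice it is useful to review the long tail as well as the top of the list.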

Predictive Defect Analysis

By analysing historical defect data - which modules had the most bugs, which types of changes tend to introduce regressions - the AI can flag high-risk areas before testing begins. Instead of running the full regression suite every time, teams prioritise the areas most likely to have broken.
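As a rough illustration, the prioritisation can be approximated with a weighted score over past defects and recent change volume. The weights, the field names, and the sample numbers below are invented for the example; a real system would typically train a model on its own defect history rather than hard-code weights.

```python
def rank_modules(history, w_defects=0.6, w_changes=0.4):
    """Return module names sorted from highest to lowest risk score."""
    # Normalise each signal by its maximum so the weights are comparable.
    max_defects = max(m["defects"] for m in history.values()) or 1
    max_changes = max(m["changes"] for m in history.values()) or 1

    def score(stats):
        return (w_defects * stats["defects"] / max_defects
                + w_changes * stats["changes"] / max_changes)

    return sorted(history, key=lambda name: score(history[name]), reverse=True)


# Hypothetical history: past defects and recent commits per module.
history = {
    "billing": {"defects": 14, "changes": 9},
    "profile": {"defects": 2, "changes": 1},
    "checkout": {"defects": 8, "changes": 12},
}

print(rank_modules(history))  # ['billing', 'checkout', 'profile']
```

The ranked list can then drive the order in which regression suites run, so the riskiest modules report results first.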

Visual Testing

AI compares UI screenshots and identifies visual regressions - layout shifts, missing elements, colour changes - that functional tests miss entirely. Tools like Applitools use AI to distinguish meaningful visual differences from acceptable rendering variations across browsers and devices.
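The difference between a meaningful change and rendering noise can be shown with a deliberately naive pixel comparison. This is not how Applitools works; it is a tolerance-based sketch that treats screenshots as grids of greyscale values, and the `tolerance` and `max_ratio` thresholds are arbitrary assumptions.

```python
def changed_ratio(baseline, candidate, tolerance=10):
    """Fraction of pixels whose grey value differs by more than `tolerance`."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("screenshots must have the same dimensions")
    total = sum(len(row) for row in baseline)
    if total == 0:
        raise ValueError("screenshots must not be empty")
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > tolerance
    )
    return changed / total


def is_visual_regression(baseline, candidate, tolerance=10, max_ratio=0.01):
    """Flag a regression when too many pixels changed noticeably."""
    return changed_ratio(baseline, candidate, tolerance) > max_ratio


# 4x4 greyscale "screenshots" (0 = black, 255 = white).
baseline = [[200] * 4 for _ in range(4)]
slightly_darker = [[203] * 4 for _ in range(4)]   # rendering variation
missing_row = [row[:] for row in baseline]
missing_row[1] = [0, 0, 0, 0]                     # an element disappeared
```

Here `slightly_darker` stays within tolerance and is not flagged, while `missing_row` changes a quarter of the pixels and is. Fixed thresholds like these break down quickly on real pages, which is the gap AI-based visual tools aim to close.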

Tools worth knowing: Testim, Applitools, Functionize, Selenium with AI integrations, Cypress with AI plugins.

A Real Case Study

During our work on a subscription-based product, we encountered significant automation stability issues. Nearly 35% of test cases were failing due to minor UI changes, requiring constant script updates.

What We Did

- Implemented dynamic locator strategies with fallback options

- Introduced AI-based visual validation

- Prioritised test cases using historical failure data

Results

- Reduced maintenance effort by 40%

- Improved test stability by 60%

- Reduced regression testing time by 25%

Lesson learned: Initially, we tried to rely fully on AI without preparing the data it needed, which led to inaccurate predictions. The fix was a hybrid model that combines rule-based and AI-driven testing, which gave us better control and better reliability.

Performance Optimisation

AI-driven testing doesn't just improve quality - it improves speed. Intelligent test selection runs only the tests relevant to what changed. Risk-based testing prioritises critical modules. Redundant test cases are identified and removed. The result is faster feedback in CI/CD pipelines without sacrificing coverage.
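Intelligent test selection can be sketched as a lookup from changed files to the tests that exercise them. The coverage map, file paths, and test names below are hypothetical; in practice the map would come from coverage data collected on earlier runs, and an AI-driven tool may infer relevance in less direct ways.

```python
def select_tests(changed_files, coverage_map):
    """Return the tests that touch at least one changed file, sorted by name."""
    changed = set(changed_files)
    return sorted(
        test for test, files in coverage_map.items() if changed & set(files)
    )


# Hypothetical map of test name -> source files it exercises.
coverage_map = {
    "test_upgrade": ["billing/plans.py", "billing/proration.py"],
    "test_downgrade": ["billing/plans.py", "billing/proration.py"],
    "test_login": ["auth/session.py"],
    "test_renewal": ["billing/renewal.py"],
}

print(select_tests(["billing/proration.py"], coverage_map))
# ['test_downgrade', 'test_upgrade']
```

A change that matches no entry in the map returns an empty list, so a pipeline using this approach still needs a fallback such as running the full suite.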

Conclusion

AI-powered testing transforms QA from a reactive process into a proactive and intelligent system. It reduces manual effort, improves accuracy, and enables faster releases.

- Reduces maintenance effort on brittle test suites

- Improves test coverage by learning from real user behaviour

- Enables faster release cycles through intelligent test prioritisation

- Enhances overall product quality through predictive defect detection

Businesses adopting AI-driven QA achieve faster time-to-market, reduced costs, and better user experience. If you're planning to upgrade your QA strategy, AI-powered testing is the right step forward.